Managing configurations

Configurations define use-case-specific presets that combine a model with a system prompt and generation parameters. Extension developers reference configurations by identifier in their code.

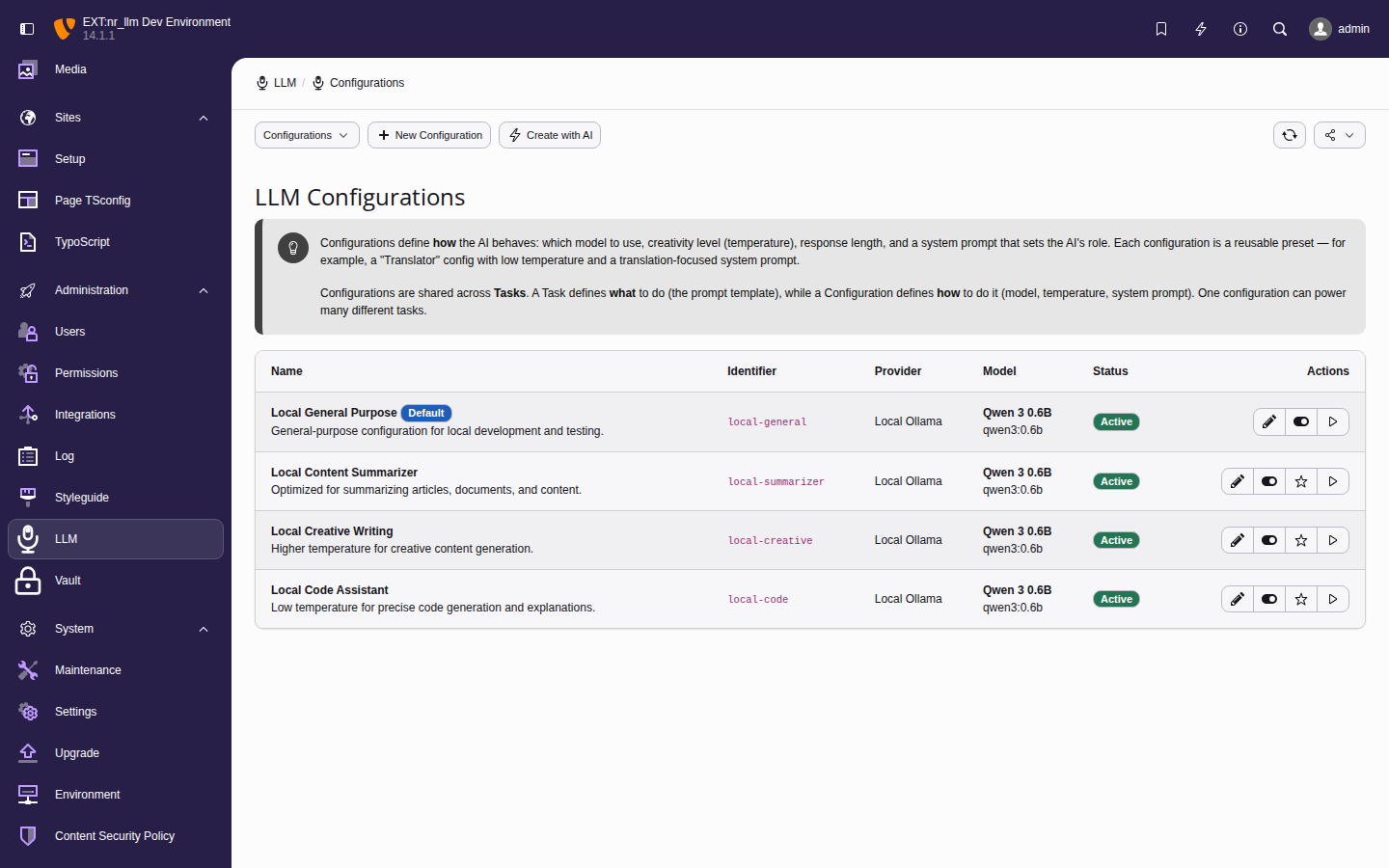

The configuration list showing each entry's linked model, use-case type, and key parameters.

Adding a configuration manually

- Navigate to Admin Tools > LLM > Configurations.
- Click Add Configuration.
- Fill in the required fields:
  - Identifier: Unique slug for programmatic access (e.g., blog-summarizer).
  - Name: Display name (e.g., Blog Post Summarizer).
  - Model: Select the model to use.
  - System Prompt: The system message that sets the AI's behavior and context.
- Optionally adjust temperature (0.0-2.0), top_p, frequency/presence penalty, max tokens, and use-case type (chat, completion, embedding, translation).
- Click Save.
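The form fields above amount to a single record. The Python sketch below models that record so the constraints are easy to see. It is hypothetical, not the extension's actual schema: the class name, field names, defaults, and slug pattern are assumptions. Only the required fields, the 0.0-2.0 temperature range, and the four use-case types come from this page. Checking that the identifier is unique requires the full list of configurations, so the sketch skips that check.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical constants: the use-case types are from the docs, the slug rule is assumed.
USE_CASE_TYPES = {"chat", "completion", "embedding", "translation"}
SLUG_PATTERN = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")


@dataclass
class LlmConfiguration:
    # Required fields from the "Add Configuration" form.
    identifier: str
    name: str
    model: str
    system_prompt: str
    # Optional generation parameters; these defaults are assumptions.
    temperature: float = 1.0
    top_p: float = 1.0
    frequency_penalty: float = 0.0
    presence_penalty: float = 0.0
    max_tokens: Optional[int] = None
    use_case_type: str = "chat"

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the record looks valid."""
        errors = []
        if not SLUG_PATTERN.match(self.identifier):
            errors.append("identifier must be a lowercase slug such as 'blog-summarizer'")
        if not self.name or not self.model or not self.system_prompt:
            errors.append("name, model, and system prompt are required")
        if not 0.0 <= self.temperature <= 2.0:
            errors.append("temperature must be between 0.0 and 2.0")
        if self.use_case_type not in USE_CASE_TYPES:
            errors.append(f"use-case type must be one of {sorted(USE_CASE_TYPES)}")
        return errors
```

For example, `LlmConfiguration(identifier="blog-summarizer", name="Blog Post Summarizer", model="example-model", system_prompt="Summarize blog posts in three sentences.", temperature=0.3).validate()` returns an empty list. The model name `example-model` is a placeholder.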

Tip

Use the Configuration wizard to generate all fields from a plain-language description of your use case.

Testing a configuration

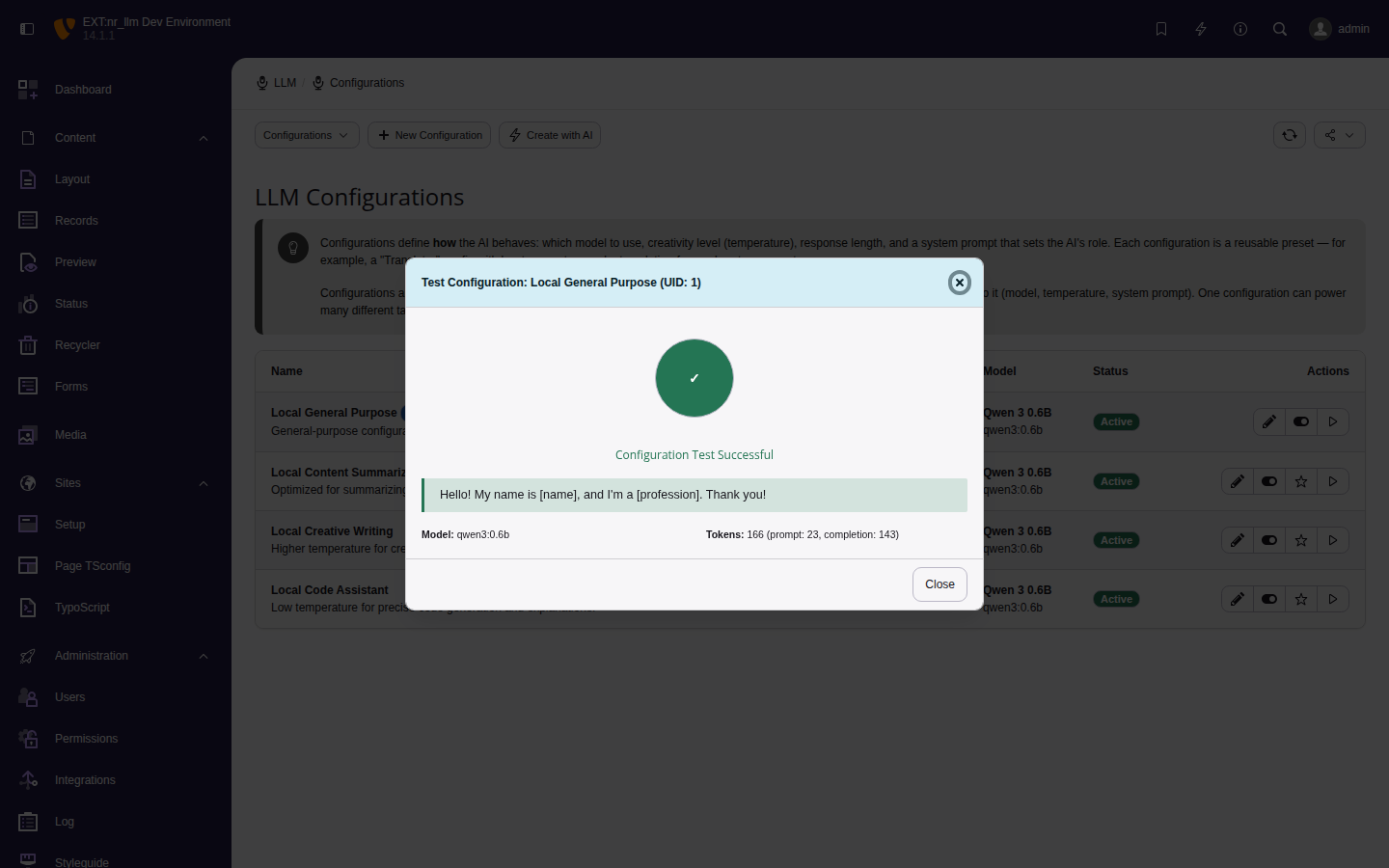

Click Test Configuration on any row. The test sends a short prompt to the model and shows the response, model ID, and token usage.

Successful configuration test with token count.
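A test like this could plausibly be assembled as follows. The sketch assumes an OpenAI-compatible chat completions API: the request keys (`model`, `messages`, `temperature`, `top_p`, `frequency_penalty`, `presence_penalty`, `max_tokens`) and the response fields (`choices`, `model`, `usage`) follow that API's shape. The configuration dict keys, the test prompt, and both function names are hypothetical, and no network call is made.

```python
# Hypothetical test prompt; the real prompt used by Test Configuration is not documented here.
TEST_PROMPT = "Reply with a short greeting."

OPTIONAL_PARAMS = ("temperature", "top_p", "frequency_penalty", "presence_penalty", "max_tokens")


def build_test_request(config: dict) -> dict:
    """Combine the configuration's model, system prompt, and parameters into one request body."""
    payload = {
        "model": config["model"],
        "messages": [
            {"role": "system", "content": config["system_prompt"]},
            {"role": "user", "content": TEST_PROMPT},
        ],
    }
    # Copy only the optional parameters that were actually set.
    for key in OPTIONAL_PARAMS:
        if config.get(key) is not None:
            payload[key] = config[key]
    return payload


def summarize_test_response(response: dict) -> dict:
    """Extract what the test dialog shows: response text, model ID, and token usage."""
    return {
        "response": response["choices"][0]["message"]["content"],
        "model_id": response["model"],
        "usage": response["usage"],
    }
```

Reporting the model ID from the response rather than from the configuration is a deliberate choice in this sketch. Some providers resolve an alias to a specific model version, and the response shows which version actually answered.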

Editing configurations

Click a configuration row to edit it. Changes take effect immediately for any extension code that references the configuration's identifier, so no code deployment is needed.
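Edits take effect without redeploying because extension code stores only the identifier and looks up the current settings when it runs. The Python sketch below illustrates that pattern. It assumes configurations live in a shared store that is queried by identifier on every call. `ConfigurationStore` and `summarize_post` are hypothetical names, not the extension's API.

```python
class ConfigurationStore:
    """Hypothetical stand-in for wherever configurations are persisted (e.g. a database table)."""

    def __init__(self) -> None:
        self._rows = {}

    def save(self, identifier: str, settings: dict) -> None:
        """Called when an admin adds or edits a configuration."""
        self._rows[identifier] = dict(settings)

    def get(self, identifier: str) -> dict:
        """Return a copy of the current settings; raises KeyError for unknown identifiers."""
        return dict(self._rows[identifier])


def summarize_post(store: ConfigurationStore, text: str) -> dict:
    # Extension code knows only the identifier. Model, prompt, and parameters
    # are resolved at call time, so an admin edit applies on the next call.
    config = store.get("blog-summarizer")
    return {"model": config["model"], "system_prompt": config["system_prompt"], "input": text}
```

In this sketch, saving new settings under `blog-summarizer` changes the model used by the very next `summarize_post` call. If extension code instead cached the resolved settings for the lifetime of a process, it would not see an edit until that cache was refreshed.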