Managing providers

Providers represent connections to AI services. Each provider stores an API endpoint, encrypted credentials, and adapter-specific settings.

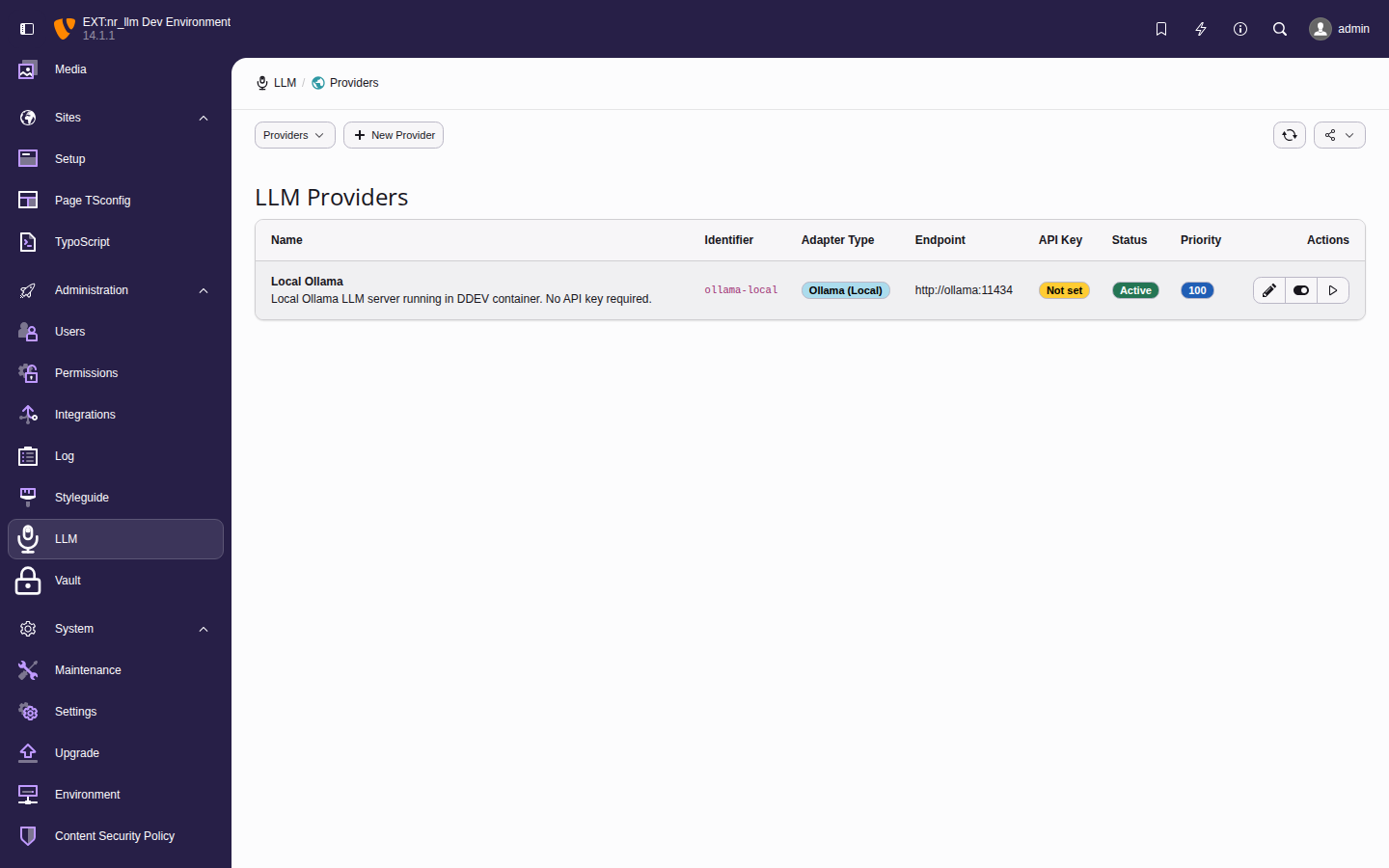

The provider list with connection status indicators and action buttons.

Adding a provider

- Navigate to Admin Tools > LLM > Providers.

- Click Add Provider.

- Fill in the required fields:

  - Identifier: A unique slug for programmatic access (e.g., openai-prod, ollama-local).

  - Name: A display name for the backend (e.g., OpenAI Production).

  - Adapter Type: The provider protocol. Available adapters: openai, anthropic, gemini, ollama, openrouter, mistral, groq, azure_openai, custom.

  - API Key: Your API key. Stored securely via nr-vault envelope encryption. Leave empty for local providers like Ollama.

- Optionally set the endpoint URL, organization ID, timeout, and retry count.

- Click Save.
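To make the field rules above concrete, the following is a minimal sketch of how a provider record could be modelled and checked before saving. The class name `ProviderForm`, the slug pattern, and the default timeout and retry values are assumptions made for illustration; only the field names and the adapter list come from this page, and the extension's actual validation may differ.

```python
import re
from dataclasses import dataclass

# Adapter types as listed in the form description above.
ADAPTER_TYPES = {
    "openai", "anthropic", "gemini", "ollama", "openrouter",
    "mistral", "groq", "azure_openai", "custom",
}

# Assumed slug format: lowercase alphanumerics separated by "-" or "_".
SLUG_PATTERN = re.compile(r"^[a-z0-9]+(?:[-_][a-z0-9]+)*$")


@dataclass
class ProviderForm:
    """Hypothetical in-memory view of the Add Provider form."""
    identifier: str
    name: str
    adapter_type: str
    api_key: str = ""           # may stay empty for local providers
    endpoint_url: str = ""      # optional
    organization_id: str = ""   # optional
    timeout_seconds: int = 30   # assumed default, not documented here
    max_retries: int = 3        # assumed default, not documented here


def validate(form: ProviderForm) -> list[str]:
    """Return a list of human-readable problems; empty means valid."""
    errors = []
    if not SLUG_PATTERN.match(form.identifier):
        errors.append("identifier must be a lowercase slug")
    if not form.name.strip():
        errors.append("name is required")
    if form.adapter_type not in ADAPTER_TYPES:
        errors.append(f"unknown adapter type: {form.adapter_type}")
    return errors
```

For example, `validate(ProviderForm("ollama-local", "Local Ollama", "ollama"))` returns an empty list, because the API key is allowed to be blank.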

Tip

Use the Setup wizard for guided first-time setup — it auto-detects the provider type from your endpoint URL.
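How the wizard detects the provider type is not described here. As a rough illustration of the idea, the sketch below guesses an adapter type from the endpoint's hostname. The function name `guess_adapter_type` and the matching rules are assumptions, not the wizard's actual logic; the hostnames are the publicly known API hosts of the respective services, and 11434 is Ollama's default port.

```python
from urllib.parse import urlparse

# Hypothetical host-suffix table; more specific suffixes come first.
HOST_SUFFIXES = [
    ("openai.azure.com", "azure_openai"),
    ("api.openai.com", "openai"),
    ("api.anthropic.com", "anthropic"),
    ("generativelanguage.googleapis.com", "gemini"),
    ("openrouter.ai", "openrouter"),
    ("api.mistral.ai", "mistral"),
    ("api.groq.com", "groq"),
]

OLLAMA_DEFAULT_PORT = 11434


def guess_adapter_type(endpoint_url: str) -> str:
    """Guess an adapter type from an endpoint URL (illustrative heuristic)."""
    parsed = urlparse(endpoint_url)
    host = (parsed.hostname or "").lower()
    for suffix, adapter in HOST_SUFFIXES:
        # Match the host itself or any subdomain of it.
        if host == suffix or host.endswith("." + suffix):
            return adapter
    if parsed.port == OLLAMA_DEFAULT_PORT:
        return "ollama"
    # Anything unrecognized falls back to the generic adapter.
    return "custom"
```

A heuristic like this can only suggest a type; an endpoint behind a reverse proxy or a custom domain would fall through to `custom` and need a manual choice.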

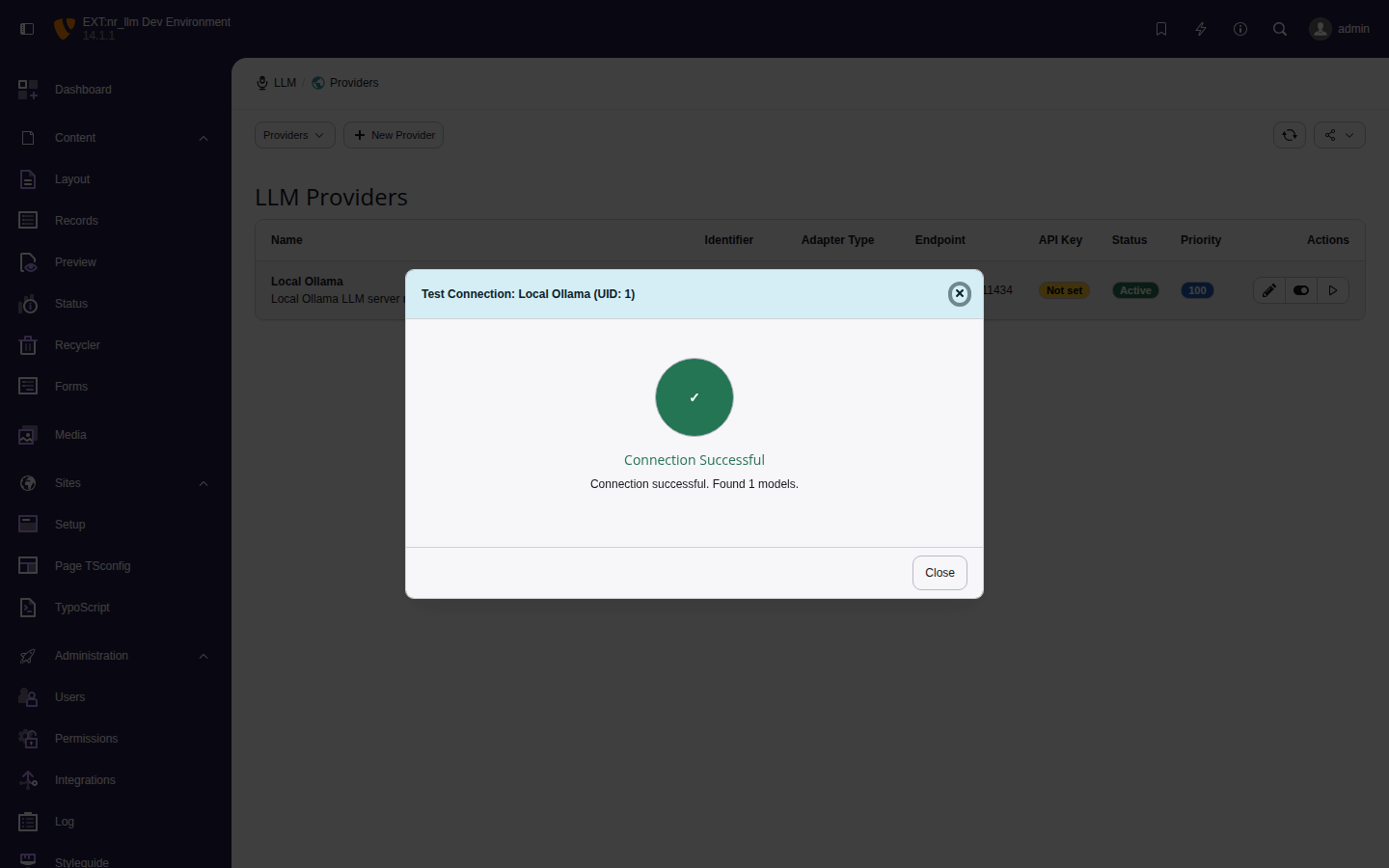

Testing a connection

After saving a provider, click Test Connection to verify the setup. The test makes an HTTP request to the provider API and reports:

- Connection status (success or failure).

- Available models (if the provider supports listing).

- Error details on failure.

Successful connection test for the Local Ollama provider.
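The three items the test reports can be sketched as a small function that requests a model listing and folds the outcome into one result object. This is an illustration under stated assumptions, not the extension's implementation: the names `ConnectionReport` and `check_connection`, the bearer-token header, and the OpenAI-style `{"data": [{"id": ...}]}` payload shape are all assumed, and other adapters may list models differently or not at all.

```python
import json
import urllib.error
import urllib.request
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ConnectionReport:
    """Hypothetical result of a connection test."""
    success: bool
    models: list = field(default_factory=list)
    error: Optional[str] = None


def http_get_json(url: str, api_key: str, timeout: float):
    """Fetch a URL and decode the JSON body (bearer auth is an assumption)."""
    headers = {"Authorization": f"Bearer {api_key}"} if api_key else {}
    request = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def check_connection(
    models_url: str,
    api_key: str = "",
    timeout: float = 10.0,
    fetch: Callable = http_get_json,
) -> ConnectionReport:
    """Request the model listing and report status, models, and any error."""
    try:
        payload = fetch(models_url, api_key, timeout)
    except (OSError, ValueError) as exc:
        # OSError covers network and HTTP errors; ValueError covers bad JSON.
        return ConnectionReport(success=False, error=str(exc))
    # Assumed payload shape: {"data": [{"id": "model-name"}, ...]}
    entries = payload.get("data", []) if isinstance(payload, dict) else []
    models = [e["id"] for e in entries if isinstance(e, dict) and "id" in e]
    return ConnectionReport(success=True, models=models)
```

Passing `fetch` as a parameter keeps the network call replaceable, so the reporting logic can be exercised with a stub instead of a live provider.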

Editing and deleting providers

- Click a provider row to edit its settings.

- Use the Delete action to remove a provider. Models linked to a deleted provider become inactive.
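The effect of deletion on linked models can be pictured with a short sketch. The names `LinkedModel` and `delete_provider` and the in-memory storage are hypothetical; the only behavior taken from this page is that models linked to a deleted provider become inactive rather than being removed.

```python
from dataclasses import dataclass


@dataclass
class LinkedModel:
    """Hypothetical model record that points at a provider by identifier."""
    name: str
    provider_identifier: str
    active: bool = True


def delete_provider(providers: dict, models: list, identifier: str) -> list:
    """Remove a provider and deactivate its models; return the affected models."""
    providers.pop(identifier, None)
    affected = [
        m for m in models
        if m.provider_identifier == identifier and m.active
    ]
    for model in affected:
        # Models are kept but flagged inactive, as described above.
        model.active = False
    return affected
```

Returning the affected models makes it easy to show an administrator which models need to be reassigned to another provider.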